Geocoding Large Datasets: Best Practices for Millions of Addresses

Geocoding a handful of addresses is straightforward. Geocoding millions of them is an entirely different challenge. At scale, small inefficiencies, data issues, and process gaps quickly multiply into performance bottlenecks and accuracy problems. Organizations working with large datasets need geocoding workflows that are designed for volume from the start.

Successfully geocoding millions of addresses requires more than raw processing power. It demands disciplined data preparation, thoughtful batching strategies, and clear quality controls. When handled correctly, large-scale geocoding becomes a reliable foundation for analytics, planning, and operational systems.

Start with Clean, Standardized Inputs

Input quality has an outsized impact when geocoding at scale. Inconsistent formatting, missing fields, and duplicated records create unnecessary ambiguity that slows processing and reduces accuracy. These issues are manageable in small datasets but become costly when repeated millions of times.

Before geocoding begins, address data should be standardized into consistent fields and validated for completeness. Removing obvious errors and normalizing formats upfront reduces downstream reprocessing. Clean inputs are one of the most effective ways to improve large-scale geocoding results.
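As a rough illustration of the preparation step described above, the sketch below normalizes whitespace and case, rejects records with missing fields, and drops exact duplicates. The field names (`street`, `city`, `postal_code`) and the helper names are assumptions made for this example, not part of any particular geocoding product.

```python
import re

# Hypothetical field names; adjust to match your own schema.
REQUIRED_FIELDS = ("street", "city", "postal_code")


def normalize_address(record):
    """Return a copy with trimmed, single-spaced, upper-cased values."""
    cleaned = {}
    for key, value in record.items():
        text = "" if value is None else str(value)
        cleaned[key] = re.sub(r"\s+", " ", text).strip().upper()
    return cleaned


def missing_fields(record):
    """List the required fields that are absent or empty."""
    return [f for f in REQUIRED_FIELDS if not record.get(f)]


def prepare(records):
    """Split input into unique, complete records and rejected records."""
    seen = set()
    ready, rejected = [], []
    for raw in records:
        rec = normalize_address(raw)
        if missing_fields(rec):
            rejected.append(rec)  # incomplete: fix upstream before geocoding
            continue
        key = tuple(rec[f] for f in REQUIRED_FIELDS)
        if key in seen:
            continue  # exact duplicate after normalization
        seen.add(key)
        ready.append(rec)
    return ready, rejected
```

Deduplicating after normalization matters: two records that differ only in spacing or case would otherwise each be sent for geocoding.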

Pro Tip: When geocoding millions of addresses, process design matters as much as technology. A well-structured workflow often delivers bigger gains than raw speed alone.

Use Batch Processing Strategically

Batch geocoding is essential for handling large address volumes efficiently. Processing records in controlled batches helps manage system load and ensures consistent throughput. It also makes it easier to track progress and isolate issues when something goes wrong.

Well-designed batch workflows allow organizations to pause, resume, and retry subsets of data without starting over. This flexibility is critical when working with millions of records. Batch processing turns geocoding into a repeatable, manageable operation rather than a single fragile job.
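One way to get pause, resume, and retry behavior is to checkpoint completed batch numbers to disk. The sketch below is a minimal version of that idea. `geocode_batch` is a hypothetical callable standing in for whatever service or library does the actual geocoding, and the approach assumes the input order and batch size stay the same between runs.

```python
import json
import os
from itertools import islice


def batches(iterable, size):
    """Yield consecutive lists of at most `size` items."""
    it = iter(iterable)
    while True:
        chunk = list(islice(it, size))
        if not chunk:
            return
        yield chunk


def load_done(path):
    """Read the set of completed batch indexes, if a checkpoint exists."""
    if not os.path.exists(path):
        return set()
    with open(path) as fh:
        return set(json.load(fh))


def save_done(path, done):
    """Write the checkpoint atomically so a crash cannot corrupt it."""
    tmp = path + ".tmp"
    with open(tmp, "w") as fh:
        json.dump(sorted(done), fh)
    os.replace(tmp, path)


def run_batches(records, geocode_batch, checkpoint_path, size=1000, max_attempts=3):
    """Process batches, skipping finished ones; return indexes that kept failing."""
    done = load_done(checkpoint_path)
    failed = []
    for index, chunk in enumerate(batches(records, size)):
        if index in done:
            continue  # already processed in an earlier run
        for attempt in range(1, max_attempts + 1):
            try:
                geocode_batch(index, chunk)  # hypothetical geocoding call
            except Exception:
                if attempt == max_attempts:
                    failed.append(index)  # give up on this batch, keep going
            else:
                done.add(index)
                save_done(checkpoint_path, done)
                break
    return failed
```

Rerunning `run_batches` with the same checkpoint file picks up where the previous run stopped, and the returned list identifies the batches that need investigation.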

Monitor Precision and Confidence at Scale

When geocoding large datasets, accuracy cannot practically be evaluated one record at a time. Instead, teams should analyze the distribution of precision levels and confidence scores across the entire dataset. Patterns in low-confidence or low-precision results often reveal systemic data issues.

Monitoring these signals helps teams identify where improvements are needed. It also prevents low-quality results from silently influencing analytics or decisions. At scale, confidence metrics are as important as the coordinates themselves.
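A dataset-level view can be as simple as counting precision levels and measuring the share of low-confidence results per region. The sketch below assumes each result is a dict with hypothetical `precision`, `confidence`, and `postal_code` keys, groups by the first three postal code characters, and uses an arbitrary 0.8 threshold; real geocoders report these signals in their own formats.

```python
from collections import Counter


def summarize(results, low_confidence=0.8, prefix_len=3):
    """Return precision counts and low-confidence share per postal prefix."""
    precision_counts = Counter()
    totals = Counter()
    low = Counter()
    for r in results:
        precision_counts[r["precision"]] += 1
        group = r["postal_code"][:prefix_len]
        totals[group] += 1
        if r["confidence"] < low_confidence:
            low[group] += 1
    # Worst groups first, so systemic problems surface at the top.
    shares = sorted(
        ((g, low[g] / totals[g]) for g in totals),
        key=lambda item: item[1],
        reverse=True,
    )
    return precision_counts, shares
```

A postal prefix with an unusually high low-confidence share usually points to a shared cause, such as a formatting quirk in one source system, rather than to many unrelated bad records.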

Design for Performance and Throughput

Large-scale geocoding places sustained demands on processing systems. Workflows must be designed to handle high throughput without degrading performance or accuracy. In practice, this means controlling request rates, the degree of parallelism, and system resource usage.

Performance considerations should be addressed early in the process. Waiting until datasets grow can lead to disruptive rework. Scalable systems ensure geocoding remains fast and reliable as data volumes increase.
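To make the request-rate and parallelism points concrete, here is a minimal sketch that combines a thread pool with a shared rate limiter. `geocode_one` is a hypothetical function for a single lookup, and the default rate and worker count are placeholders; actual limits depend on the service or hardware in use.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class RateLimiter:
    """Space calls evenly so that at most `rate` start per second."""

    def __init__(self, rate):
        self.interval = 1.0 / rate
        self.lock = threading.Lock()
        self.next_slot = time.monotonic()

    def wait(self):
        # Reserve the next free time slot under the lock, sleep outside it.
        with self.lock:
            now = time.monotonic()
            slot = max(self.next_slot, now)
            self.next_slot = slot + self.interval
        delay = slot - now
        if delay > 0:
            time.sleep(delay)


def geocode_all(addresses, geocode_one, rate=50, workers=8):
    """Geocode addresses in parallel while respecting a global rate limit."""
    limiter = RateLimiter(rate)

    def task(address):
        limiter.wait()
        return geocode_one(address)  # hypothetical single-address lookup

    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in input order.
        return list(pool.map(task, addresses))
```

Separating the limiter from the worker pool lets the two be tuned independently: the worker count hides latency, while the rate caps the load placed on the geocoder.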

Plan for Incremental Updates, Not One-Time Jobs

Large datasets rarely stay static. New records are added, existing addresses change, and corrections are made over time. Treating geocoding as a one-time task leads to outdated results and inconsistent data quality.

Instead, workflows should support incremental geocoding. This allows teams to process new or changed records without reprocessing entire datasets. Incremental updates keep location data current while minimizing unnecessary work.
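A common way to detect new or changed records is to store a fingerprint of each address and compare it on the next run. The sketch below shows that technique under a few assumptions: records carry a hypothetical `id` field, the address fields are named as shown, and `known` is a mapping of record id to the fingerprint saved from the previous run.

```python
import hashlib

# Hypothetical address fields used to build the fingerprint.
ADDRESS_FIELDS = ("street", "city", "postal_code")


def fingerprint(record, fields=ADDRESS_FIELDS):
    """Hash the normalized address fields of a record."""
    joined = "|".join(str(record.get(f, "")).strip().upper() for f in fields)
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()


def select_changed(records, known):
    """Return records that are new or changed, plus the updated fingerprints."""
    to_geocode = []
    updated = dict(known)
    for rec in records:
        fp = fingerprint(rec)
        if known.get(rec["id"]) != fp:
            to_geocode.append(rec)
            updated[rec["id"]] = fp
    return to_geocode, updated
```

In a real pipeline the updated fingerprints would be persisted only after the selected records have been geocoded successfully, so that a failed run does not mark them as done.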

Handle Edge Cases Explicitly

At scale, even rare edge cases appear frequently. International addresses, rural locations, new developments, and nonstandard formats all require special handling. Ignoring these cases leads to clusters of low-quality results.

Identifying and categorizing edge cases allows teams to apply targeted solutions. This may include separate processing rules or additional validation steps. Explicitly handling edge cases improves overall dataset quality.
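Categorization can start with a small, ordered set of rules that route each record to a processing path. The sketch below is illustrative only: the category names, the patterns, and the default home country are assumptions, and a production rule set would be considerably broader.

```python
import re

# Illustrative rules, checked in order; the first match wins.
RULES = [
    ("po_box", re.compile(r"\bP\.?\s*O\.?\s*BOX\b", re.IGNORECASE)),
    ("rural_route", re.compile(r"\b(RR|RURAL ROUTE)\s*\d+", re.IGNORECASE)),
    ("no_house_number", re.compile(r"^\D*$")),  # street line contains no digits
]


def categorize(street, country="US", home_country="US"):
    """Assign a street line to an edge-case category, or 'standard'."""
    if country != home_country:
        return "international"
    for name, pattern in RULES:
        if pattern.search(street):
            return name
    return "standard"
```

Counting records per category before geocoding gives an early estimate of how much of the dataset needs special handling.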

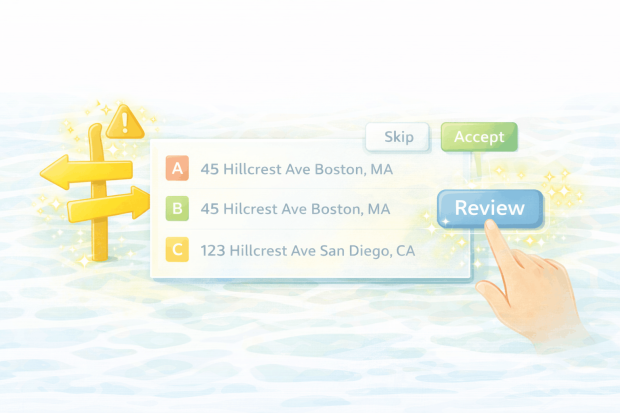

Build Quality Checks into the Workflow

Quality assurance must be automated when working with millions of records, because manual review is impractical at that volume. Workflows should include checks for precision thresholds, confidence scores, and unexpected geographic patterns.

These checks help detect issues early and prevent bad data from propagating through downstream systems. Quality controls are most effective when they are continuous rather than reactive.
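The checks mentioned above can be expressed as a small function that runs on every result, plus a gate that stops a batch from moving downstream when too many results fail. The precision labels, thresholds, result keys, and bounding-box format in this sketch are all assumptions chosen for illustration.

```python
# Hypothetical precision labels, ordered from coarse to fine.
PRECISION_RANK = {"city": 0, "postal_code": 1, "street": 2, "rooftop": 3}


def check_result(result, bbox, min_confidence=0.8, min_precision="street"):
    """Return a list of problems; bbox is (min_lat, min_lon, max_lat, max_lon)."""
    problems = []
    lat, lon = result["lat"], result["lon"]
    min_lat, min_lon, max_lat, max_lon = bbox
    if lat == 0 and lon == 0:
        problems.append("zero_coordinates")  # typical sign of a failed lookup
    elif not (min_lat <= lat <= max_lat and min_lon <= lon <= max_lon):
        problems.append("outside_expected_area")
    if result["confidence"] < min_confidence:
        problems.append("low_confidence")
    if PRECISION_RANK.get(result["precision"], -1) < PRECISION_RANK[min_precision]:
        problems.append("low_precision")
    return problems


def batch_passes(results, bbox, max_failure_rate=0.05):
    """Gate a batch: pass only if few enough results have problems."""
    results = list(results)
    if not results:
        return True
    failures = sum(1 for r in results if check_result(r, bbox))
    return failures / len(results) <= max_failure_rate
```

Running the gate on every batch, rather than once at the end, is what makes the checks continuous: a bad batch is held back before it reaches downstream systems.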

Why Scale Changes Everything

Geocoding at scale exposes weaknesses that may not be visible in smaller datasets. Processes that work for thousands of records often fail when applied to millions. Recognizing this shift is key to designing robust systems.

Organizations that plan for scale from the beginning are better positioned to use location data confidently. Scalable geocoding supports growth without sacrificing accuracy or reliability.

Building Reliable Large-Scale Geocoding Systems

Geocoding large datasets is a test of both data discipline and system design. Clean inputs, batch processing, performance planning, and ongoing quality monitoring all play critical roles. Together, they create workflows that can handle growth without constant intervention.

When large-scale geocoding is done well, location data becomes a stable asset rather than a recurring problem. That stability enables organizations to scale analytics, planning, and operations with confidence.